Docker Log Plugin

A quest I took on 2 November: making my own docker log plugin. It seemed suitable to share with everyone.

At the office, we mix docker-only hosts with on-premise kubernetes clusters. For example, our databases run better and more stably on plain docker hosts, with no kubernetes intervention. That’s mainly due to the sub-optimal distributed storage in our on-premise cluster.

But… we do want to send all of the logs from both kubernetes and docker hosts to a central logging system (ELK stack). For kubernetes we use fluent-bit, with a filter which adds kubernetes metadata, such as the container name.

So what could we use for the docker-only hosts? Fluent-bit can be coupled with docker in such a way that the docker daemon sends the logs directly from the container to the fluent-bit process. This will probably work OK. But… what you lose is the possibility to run “docker logs -f …”. And if fluent-bit or the elastic storage has issues, you lose the logs completely.

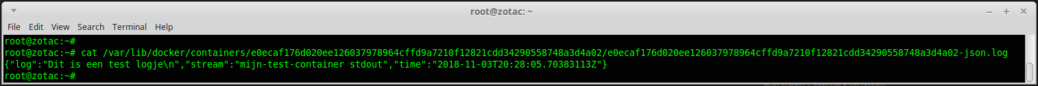

The other option, therefore, would be to just get fluent-bit (or a simple filebeat job) to send the /var/lib/docker/containers/*/*.log files as-is to the elastic storage. In that case you keep the ability to use the “docker logs” command, and if something breaks, the logs stay on disk and the shipper tries sending them again later. The most important thing we are missing in this case is the container names of the running docker instances. These names are not in the log file name and not in the file content, so we need another way of tagging the data.
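To make the missing-name problem concrete, here is a minimal Go sketch that lists the top-level fields of one log line. The sample line is made up, but follows the shape docker's json-file driver writes (log, stream, time). The helper name `fieldNames` is purely illustrative.

```go
package main

import (
	"encoding/json"
	"fmt"
	"sort"
)

// fieldNames returns the sorted top-level keys of one JSON log line.
// (Hypothetical helper, only here to show what a line contains.)
func fieldNames(line []byte) ([]string, error) {
	var fields map[string]json.RawMessage
	if err := json.Unmarshal(line, &fields); err != nil {
		return nil, err
	}
	names := make([]string, 0, len(fields))
	for name := range fields {
		names = append(names, name)
	}
	sort.Strings(names)
	return names, nil
}

func main() {
	// Made-up sample line in the shape the json-file driver writes.
	line := []byte(`{"log":"hello\n","stream":"stdout","time":"2020-01-01T00:00:00Z"}`)
	names, err := fieldNames(line)
	if err != nil {
		panic(err)
	}
	// The container name is not among these fields.
	fmt.Println(names)
}
```

Whatever ships these files only sees those three fields plus the file path, which holds the container id rather than its name.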

Enter the docker-log-plugin, which extends the contents of the docker log files.

I started from the standard log plugin example. That was quite a challenge: at the time of this writing, there was NO description of how to build and use it. Even worse, the example did not even compile, and had some nasty bugs.

The standard example wraps the json logging module. So to get the container name in the output, I opted for modifying one of the existing fields in the output. The “stream” field normally contains something like “stdout” to indicate the log line was sent to standard out by the job running in your container. Using my plugin, you can change the contents of that field to contain the container name, a space, and then the original content (stdout).
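The effect on a single log line can be sketched in Go as below. This is not the plugin's actual code (that lives in the github repo and hooks into the json logger itself). It is just a small standalone illustration of the resulting transformation. The names `logEntry` and `tagStream`, the container name “my-app” and the sample line are all made up for the example.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// logEntry mirrors the three fields found in a json-file log line.
type logEntry struct {
	Log    string `json:"log"`
	Stream string `json:"stream"`
	Time   string `json:"time"`
}

// tagStream rewrites "stream" to "<container name> <original stream>".
// (Hypothetical helper; it only illustrates the output format.)
func tagStream(line []byte, containerName string) ([]byte, error) {
	var e logEntry
	if err := json.Unmarshal(line, &e); err != nil {
		return nil, err
	}
	e.Stream = containerName + " " + e.Stream
	return json.Marshal(e)
}

func main() {
	// Made-up sample line and container name.
	in := []byte(`{"log":"hello\n","stream":"stdout","time":"2020-01-01T00:00:00Z"}`)
	out, err := tagStream(in, "my-app")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out)) // "stream" now reads "my-app stdout"
}
```

Reusing an existing field keeps the line layout unchanged, so anything that already parses these files keeps working. The price is that “stream” now carries two values in one string.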

Now you can filter in the ELK stack (in kibana) on the “stream” field to identify the logs for your application.

Note: we have not started using this solution yet… It was merely a nice bit of investigation for me, to keep at hand as a possible solution. We will probably go with the out-of-the-box docker to fluent-bit setup, with all of its disadvantages. But you never know. We might switch to using this log plugin.

Here’s the link to github: https://github.com/atkaper/docker-log-driver-test

Thijs.