Micro-services Architecture with Oauth2 and JWT – Part 2 – Gateway

For the last several years I have been working on migrating legacy monolith (web) applications to a (micro) service oriented architecture (in my role as Java / DevOps / Infrastructure engineer). As this subject is too big for a single blog post, I will split it into 6 parts: (1) Overview, (2) Gateway, (3) Identity Provider, (4) Oauth2/Scopes, (5) Migrating from Legacy, (6) Oauth2 and Web.

(Note: this article was updated on the 17th of January 2020, adding Gloo as a gateway candidate, and again at the end of December 2020, when we migrated to Spring Cloud Gateway.)

Introduction

So what is this gateway? What is its role / purpose?

We are talking about a micro-services landscape, where we have many small services (applications). To build a big application, you need to be able to call many of those services, but perhaps not all of them.

That’s where the gateway comes in. It manages the proper routing to the requested service. Not just where to find it, and how to get there, but it also makes sure you are actually allowed to get there. It makes sure you went through (Oauth2) authentication, and uses that for checking the authorizations.

As all (services) traffic will go through it, the Gateway must be a high performance and secure component. For this we were using a (Java) Netflix library called Zuul, together with spring-boot. This is actually a surprisingly small piece of code (at least our custom glue code, to connect the proper libraries), which performed quite well. Since December 2020 we have switched to Spring Cloud Gateway (fully asynchronous, and a bit more stable than Zuul was!).

We started using Zuul because it seemed simple to implement, and as Netflix handles high-volume traffic we thought we would be OK with this. Over the last years this has proven to be an excellent choice! (But… the version we used was no longer supported, so we moved to Spring Cloud Gateway, which is very similar and really good as well. Better even…)

The Parts

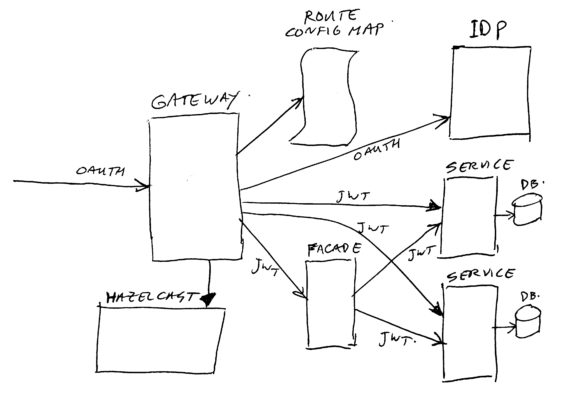

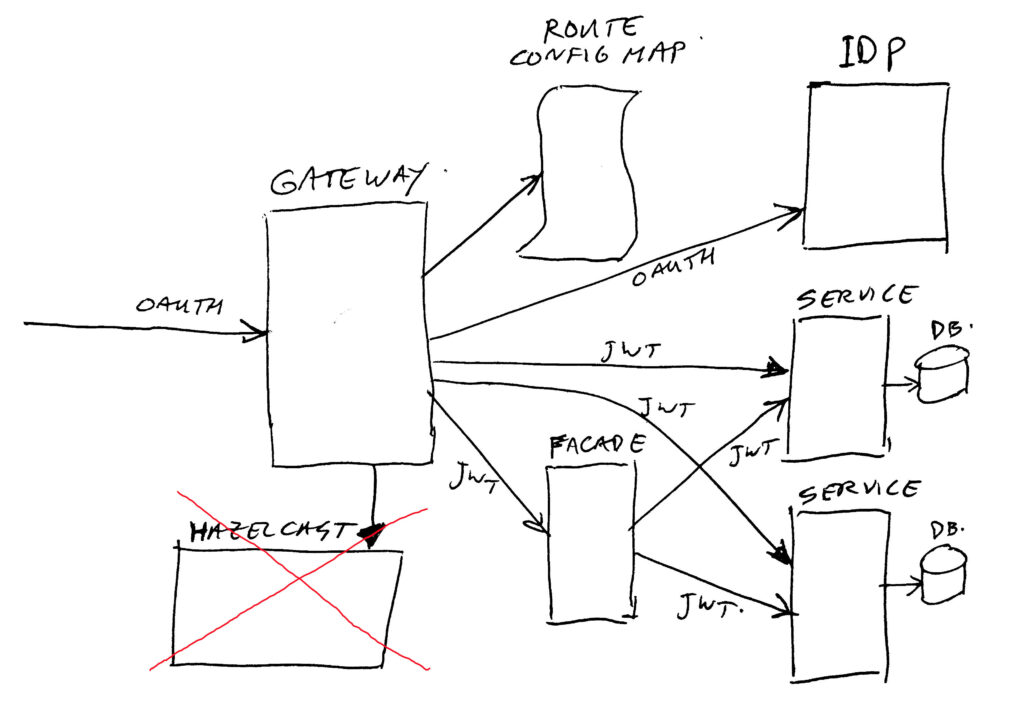

So let’s take a look at the gateway and its surrounding components:

Gateway

The Spring Cloud Gateway (previously Zuul) traffic router, which is nothing more than a (good) small reverse proxy / routing framework.

Luckily it is easy to extend its functionality, which we needed. See the chapter on customizations for all the neat extras we have put in there to make it a suitable gatekeeper for us.

Route Config Map

The configuration source containing all routing data, letting Spring Cloud Gateway (previously Zuul) know what to do. A route consists of things like a source context path, a destination service path, and some security attributes. Standard Zuul did not support many attributes (mainly source and destination). We have extended this with the security parts. Our new Spring Cloud Gateway is more flexible in extending route attributes.

In our case, we store the routes in a so-called Kubernetes “Config Map” object. This is a piece of data which can be edited easily at any time. The map is “mounted” as a volume, with the config YAML file in it, in the gateway deployment. The gateway has been set up to reload the map if it changes. This allows us to add new routes on-the-fly without having to take the gateway down for a fresh deployment.

If you are not running in Kubernetes, you can also try using the standard spring-cloud configuration server for storing this data. The spring-cloud configuration server uses Git as back-end for getting your configuration data from.
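To illustrate the reload idea, here is a minimal sketch in plain Java. It is not our actual gateway code: the class name `ReloadingRouteFile` and its methods are made up for this example, and it simply compares the file content with the previously loaded content instead of parsing real route definitions.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;

// Hypothetical helper: keeps the last loaded content of a mounted route file.
final class ReloadingRouteFile {
    private final Path file;
    private List<String> lines = List.of();

    ReloadingRouteFile(Path file) {
        this.file = file;
    }

    // Re-reads the file and returns true only when the content differs
    // from what was loaded before (so routes need to be rebuilt).
    synchronized boolean reloadIfChanged() {
        try {
            List<String> fresh = Files.readAllLines(file);
            if (fresh.equals(lines)) {
                return false;
            }
            lines = List.copyOf(fresh);
            return true;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    synchronized List<String> currentLines() {
        return lines;
    }
}
```

A scheduled task (for example a `ScheduledExecutorService`) could call `reloadIfChanged()` every so many seconds and rebuild the route table only when it returns true.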

Identity Provider (IDP)

The IDP is the component which handles Oauth2 protocols, checks user credentials, manages Oauth2 tokens, and creates JWTs (JSON Web Tokens) for use by our services.

The Identity Provider (IDP) is important and big enough that it will get its own article/post.

Hazelcast

One of our customizations (see the later chapter on that) is rate limiting.

When required, we can enable a rate limit per connected client. But as we are running 8 instances of the gateway, with random (on average equal distribution) load-balancing to them, we needed a collective (central) piece of memory to keep a count of the actual rate in use. For this we use a Hazelcast cluster (running 3 nodes), which is a distributed key/value cache system.
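A minimal sketch of such a shared counter, assuming a simple fixed-window scheme (the actual algorithm we used is not described here). The class `FixedWindowRateLimiter` and its parameters are invented for illustration, and a local `ConcurrentHashMap` stands in for the distributed cache that all gateway instances would share.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

// Hypothetical fixed-window limiter: counts requests per client per time window.
final class FixedWindowRateLimiter {
    private final ConcurrentMap<String, Integer> counters;
    private final int maxRequestsPerWindow;
    private final long windowMillis;

    FixedWindowRateLimiter(ConcurrentMap<String, Integer> counters,
                           int maxRequestsPerWindow,
                           long windowMillis) {
        this.counters = counters;
        this.maxRequestsPerWindow = maxRequestsPerWindow;
        this.windowMillis = windowMillis;
    }

    // Returns true when the request may pass, false when the caller
    // should receive a 429 response.
    boolean tryAcquire(String clientId, long nowMillis) {
        long window = nowMillis / windowMillis;
        String key = clientId + ":" + window;
        // Atomically increment the counter for this client and window.
        int count = counters.merge(key, 1, Integer::sum);
        return count <= maxRequestsPerWindow;
    }

    // Note: keys of old windows are never removed in this sketch; a shared
    // cache would normally expire them with a time-to-live.
    static FixedWindowRateLimiter inMemory(int maxRequestsPerWindow, long windowMillis) {
        return new FixedWindowRateLimiter(new ConcurrentHashMap<>(), maxRequestsPerWindow, windowMillis);
    }
}
```

Because every instance increments the same key for the same client and window, the count reflects the total traffic of that client, no matter which gateway instance handled each request.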

(Addition December 2020: we have migrated away from Zuul, and in the process we did not keep the Hazelcast rate limiter. We will do rate limiting using Istio (Service Mesh) or Akamai (cloud protection) instead.)

Services / Facades

I assume you know what a Service is… The Facade is (just like a service) a small piece of code implementing one or more REST endpoints. The main difference is that a Service is regarded as the bottom layer in our system, doing database access where needed, while a Facade is used to combine multiple Services into one REST endpoint, and to transform the data to a suitable format for this combination.

A real life example would be having an order service, and a product service. The order service only knows about order lines, with product keys and the product-count in them. The product service knows all about the products. In front of these two services, we put a Facade which combines the two services into a shopping cart or complete order detail list. But you can also just call the product service without going through a facade, if you just need to know some product details.
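In code, the order/product example could look roughly like the sketch below. All names (`OrderFacade`, `OrderService`, `ProductService`, the record fields and the price in cents) are invented for this illustration; in reality the two services would be called over REST instead of through Java interfaces. It assumes Java 16 or newer because of the records.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical facade combining an order service and a product service.
final class OrderFacade {
    record OrderLine(String productKey, int count) {}
    record Product(String key, String name, long priceCents) {}
    record OrderDetail(String productName, int count, long lineTotalCents) {}

    // Stand-ins for the REST clients of the two underlying services.
    interface OrderService { List<OrderLine> linesFor(String orderId); }
    interface ProductService { Product byKey(String productKey); }

    private final OrderService orders;
    private final ProductService products;

    OrderFacade(OrderService orders, ProductService products) {
        this.orders = orders;
        this.products = products;
    }

    // Combines order lines (keys and counts) with product data into one list.
    List<OrderDetail> orderDetails(String orderId) {
        List<OrderDetail> details = new ArrayList<>();
        for (OrderLine line : orders.linesFor(orderId)) {
            Product product = products.byKey(line.productKey());
            details.add(new OrderDetail(
                    product.name(), line.count(), product.priceCents() * line.count()));
        }
        return details;
    }
}
```

The order service still knows nothing about products, and the product service nothing about orders; only the facade knows how to combine them.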

The Customizations

As mentioned before, Spring Cloud Gateway (like Zuul before it) does not have much functionality in itself. So we have added several functions to support our use-cases / architecture:

(1) Exchanging the Oauth2 access token for a JWT (JSON Web Token). We do check validity of the access token for every call (by asking the IDP). If the token is valid, we retrieve the user context and put it in a JWT. If it is invalid, we pass on a 401 authentication response to the caller. The JWT is signed, so the services can check its validity. It contains “scopes”, a customer-number (if available), and some more attributes. Note: the JWT is calculated/constructed by the IDP.

(2) Scheduled re-loading of context path to service route mappings. The routes are read from a Kubernetes config map. This allows us to change the routes on-the-fly, without downtime or deployment.

(3) Custom additional security on the route mappings. We define which Oauth2 scopes are needed to be able to reach a service endpoint. If the caller has no access, we send back an error 403. The scopes for the caller are read from the JWT, and compared with the scopes as registered per route. In many cases, the called services will also check the passed in scopes for finer grained access control.

(4) Rate limiting. When too many requests are done for certain cases, we respond with error 429. After a while, the caller may try again. For this we use Hazelcast as distributed cache to make sure all gateway instances have the same view on the rate limit status and counts. (Removed end of 2020).

(5) Adding Cross-Origin Resource Sharing (CORS) headers. These are needed when calling a REST endpoint directly from a web browser (using an Ajax/JavaScript call).

(6) Log correlation. Each request gets a random-id, and that ID is passed on to the chain of services (as extra request and response headers), and written as log attribute on all log messages. This way you can correlate different logs of different services to a specific initiating request. This greatly helps issue solving.

(7) Support for routing to some old legacy services, which do not handle a JWT, but need a basic-authentication header to allow access.

(8) Prometheus metrics. Prometheus is a metrics collection system. It polls for standard and custom counters in the services and other deployments. This allows us to draw useful graphs and handle alerts using Grafana and Prometheus.

(9) A small but important one: a custom route prioritizer. We have some overlapping routes. So we had to implement a way of giving the route paths with the most slashes (/) in them precedence over the overlapping ones with fewer slashes in them. An example: /abc/v2/* can map to service B, and /abc/* can map to service A. Without the prioritizer, the traffic for v2 could end up in service A instead of B. With the prioritizer it nicely ends up in B as required.
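The route prioritizer from (9) can be sketched in a few lines of plain Java. This is only an illustration of the idea, not our real filter: the class `RoutePrioritizer` is made up, and it assumes patterns are either exact paths or end with a `*` wildcard.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.Optional;

// Hypothetical prioritizer: routes with more slashes are tried first.
final class RoutePrioritizer {
    record Route(String pattern, String service) {}

    private final List<Route> routes = new ArrayList<>();

    RoutePrioritizer(Map<String, String> patternToService) {
        patternToService.forEach((pattern, service) -> routes.add(new Route(pattern, service)));
        // Most specific (most slashes) first, so /abc/v2/* wins over /abc/*.
        routes.sort(Comparator.comparingLong((Route r) -> slashCount(r.pattern())).reversed());
    }

    static long slashCount(String pattern) {
        return pattern.chars().filter(c -> c == '/').count();
    }

    private static boolean matches(String pattern, String path) {
        if (pattern.endsWith("*")) {
            // Prefix match on everything before the trailing wildcard.
            return path.startsWith(pattern.substring(0, pattern.length() - 1));
        }
        return pattern.equals(path);
    }

    // Returns the service of the first (most specific) matching route, if any.
    Optional<String> serviceFor(String path) {
        for (Route route : routes) {
            if (matches(route.pattern(), path)) {
                return Optional.of(route.service());
            }
        }
        return Optional.empty();
    }
}
```

With the routes `/abc/*` (service A) and `/abc/v2/*` (service B) registered in any order, a request for `/abc/v2/items` is matched against `/abc/v2/*` first and ends up in service B.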

There are some more small custom things in our gateway, but these are too specific to mention here (having to do with caching / performance / status checks). The most important ones are listed above already.
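Of the customizations listed above, the scope check from (3) is probably the easiest one to show in code. The sketch below makes two assumptions that are not taken from our gateway: the scopes arrive as one space-separated string (the format Oauth2 uses for its scope parameter), and a caller needs all scopes registered on a route. The class `RouteScopeCheck` is invented for this example.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Set;

// Hypothetical scope check between caller scopes and route scopes.
final class RouteScopeCheck {
    // Splits a space-separated scope string into a set of scopes.
    static Set<String> parseScopes(String scopeClaim) {
        if (scopeClaim == null || scopeClaim.isBlank()) {
            return Set.of();
        }
        return new HashSet<>(Arrays.asList(scopeClaim.trim().split("\\s+")));
    }

    // Assumption: every scope registered on the route must be present.
    // When this returns false, the gateway would answer with a 403.
    static boolean allowed(Set<String> callerScopes, Set<String> requiredScopes) {
        return callerScopes.containsAll(requiredScopes);
    }
}
```

A route without registered scopes is open to every authenticated caller in this sketch, because an empty set of required scopes is always satisfied.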

Closing Thoughts

Earlier I mentioned that we are “currently” using Zuul. What I meant by that is that we are investigating a replacement. The new version of Zuul cannot be combined with spring-boot (or the other way around), and we do need to keep our libraries up-to-date. We like to keep spring-boot, so (sadly) Zuul will have to go… (And… it’s gone since December 2020.)

We have looked at some alternatives, both Java and non-Java. There are two products left on our shortlist. One of these is spring-cloud-gateway, which seems to be quite similar to Zuul. The other one was Ambassador, which is more integrated with Kubernetes, but not Java (new insights: instead of Ambassador we will look at “Gloo” first). The choice has not been made yet. (Addition October 2020: we did choose spring-cloud-gateway.)

The bigger packages (WSO2, Mulesoft, Gravitee) and other non-Java packages (Tyk, ApiGee) have been dropped as not suitable for our use-case (this does not mean they are not OK, they just did not fit our use-case in the best way).

Four of these packages have been tested by a colleague: our old/current Zuul running with 8 instances, and the others at matching scale. As dummy back-end we used a Spring Boot service (multiple instances) which sends “pong” as reply to a “ping” HTTP GET request.

These were the four tested ones: Zuul, Gravitee, Spring Cloud Gateway, and Ambassador. Average call time for calling the ping service: 20 ms for three of them, and 13 ms for Gravitee (but no clue if Gravitee was perhaps doing some caching). So the response times were quite similar for all of them. We ran this with an average load of 1000 concurrent users, executing 100 requests per second in total. For this we use the suite of Perfana, Gatling and Grafana, which works really well. Small note: Zuul did have all of our custom filters and changes, the others were plain / out-of-the-box with nothing extra.

Apart from this gateway replacement, a side note: we might replace Hazelcast (used for rate limiting) with a PostgreSQL database, or with a clustered MongoDB. On a limited scale, Hazelcast performs quite well. But it does not have any nice statistics and monitoring options out of the box that can be sent to Prometheus. That makes it hard to monitor Hazelcast, and to predict when we would need to scale it up or tune its resource limits. I think PostgreSQL or MongoDB will be easier to monitor, and they have proven to work well on a larger scale.

Addition: we threw out the Hazelcast rate limiting. We will do that with Istio or Akamai, as mentioned elsewhere on this page…

That’s it for part 2 of the series.

Thijs.

All parts of this series:

- Part 1 – Overview

- Part 2 – Gateway

- Part 3 – IDP

- Part 4 – Oauth2/Scopes

- Part 5 – From Legacy Monolith to Services

- Part 6 – Oauth2 and Web